Data center energy consumption is increasing exponentially – and the consequences?


New York, March 10, 2020

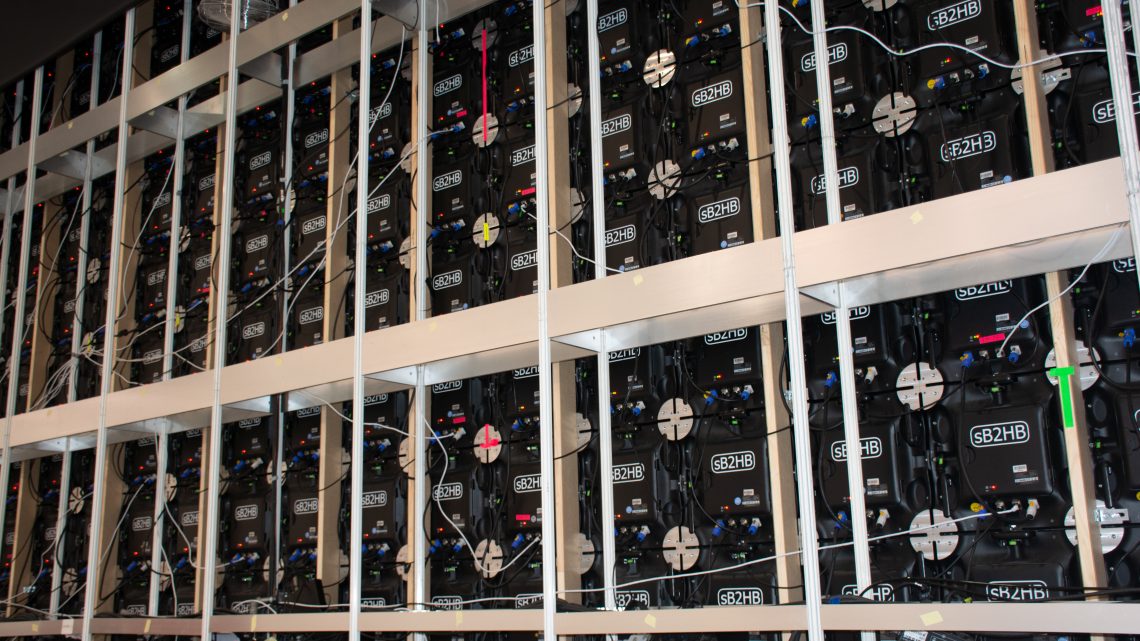

Demand for data centers has skyrocketed over the past decade to keep pace with the growing use of application software, social media, video and mobile apps. What are the consequences? Are these cathedrals of computing devouring electricity?

Since 2010, the number of data centers has increased more than sixfold. A recent study published in “Science” came to the surprising conclusion that their energy consumption has hardly changed, thanks to enormous improvements in energy efficiency. Nonetheless, the report makes clear that there is no guarantee this trend will continue, given data-hungry new technologies such as artificial intelligence and 5G.

Previous analyses had suggested that the energy consumption of data centers at least doubled over the past ten years. However, the new study points out that those earlier studies generally did not take improvements in energy efficiency into account. The new paper was written by leading experts from Northwestern University, the Lawrence Berkeley National Laboratory and the research company Koomey Analytics.

In 2010, data centers around the world consumed around 194 terawatt-hours (TWh) of electricity, or about 1 percent of global electricity consumption, the study said. By 2018, the computing capacity of data centers had increased sixfold, internet traffic tenfold and storage capacity by a factor of 25. Over the same period, the study found, the energy consumption of data centers rose by only 6 percent, to 205 TWh.
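The arithmetic behind these figures can be checked with a short Python sketch. It uses only the numbers quoted above. The function names are illustrative, and dividing total energy growth by each activity's growth is a crude fleet-wide average assumed here for illustration, not the method used in the study.

```python
# Figures quoted in the article for 2010 and 2018.
ENERGY_2010_TWH = 194.0
ENERGY_2018_TWH = 205.0

# Growth factors between 2010 and 2018, as quoted in the article.
GROWTH_FACTORS = {
    "computing capacity": 6.0,
    "internet traffic": 10.0,
    "storage capacity": 25.0,
}


def percent_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100.0


def intensity_ratio(energy_growth: float, activity_growth: float) -> float:
    """Energy per unit of activity in 2018 relative to 2010 (1.0 = unchanged).

    Assumption: all data center energy is attributed to the activity,
    which gives only a rough average.
    """
    return energy_growth / activity_growth


energy_growth = ENERGY_2018_TWH / ENERGY_2010_TWH

print(f"Energy use change: {percent_change(ENERGY_2010_TWH, ENERGY_2018_TWH):.1f}%")
for name, growth in GROWTH_FACTORS.items():
    ratio = intensity_ratio(energy_growth, growth)
    print(f"Energy per unit of {name}: {ratio:.2f}x the 2010 level")
```

Under these assumptions, the increase from 194 to 205 TWh works out to a little under 6 percent, while the energy needed per unit of computing capacity falls to less than a fifth of its 2010 level.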

The difference is the result of improvements in energy efficiency since 2010. The typical server now uses about a quarter of the energy it did then, and storing one terabyte of data takes about a ninth of the energy. Virtualization software, which allows one machine to act as multiple computers, has further improved efficiency. So has the trend towards concentrating servers in “hyperscale” cloud computing centers. Cooling systems have also become much leaner. Some technology companies submerge data centers underwater or build them in the Arctic.

The study’s conclusions met with surprise and skepticism. Mike Demler, a semiconductor analyst at the Linley Group, wants more quantitative evidence that hardware efficiency gains outweigh growing demand. Demler suspects that the picture in China could be different. “There is no good data from China, which is probably the fastest-growing contributor to data center energy consumption,” he says.

Lotfi Belkhir, an associate professor at McMaster University in Canada, co-authored a previous paper that predicted an approximately tenfold increase in the share of global greenhouse gas emissions from data centers by 2040. He does not expect energy efficiency to keep improving at a linear rate. For one thing, the chip industry is now pushing the limits of Moore’s law. For another, he argues, the Science paper does not take into account the rise of cryptocurrencies and blockchains, which are very energy-intensive.

The authors of the Science paper themselves say that their findings do not mean data center energy consumption will stay flat. Demand is likely to rise significantly in the coming years with the introduction of new technologies such as AI and autonomous vehicles.

AI is likely to spread to all areas of industry, but its future impact on energy consumption is difficult to predict. Modern machine learning programs are computationally intensive, but efforts are under way to develop more efficient AI chip designs and algorithms, and to use more efficient, specialized chips that run on “edge” devices such as smartphones and sensors. In addition, the use of new, extremely energy-hungry technologies such as quantum computing is imminent.